A·R·D* or letting geometry listen

Written on March 26th, 2026 by Dialekti Valsamou-Stanislawski

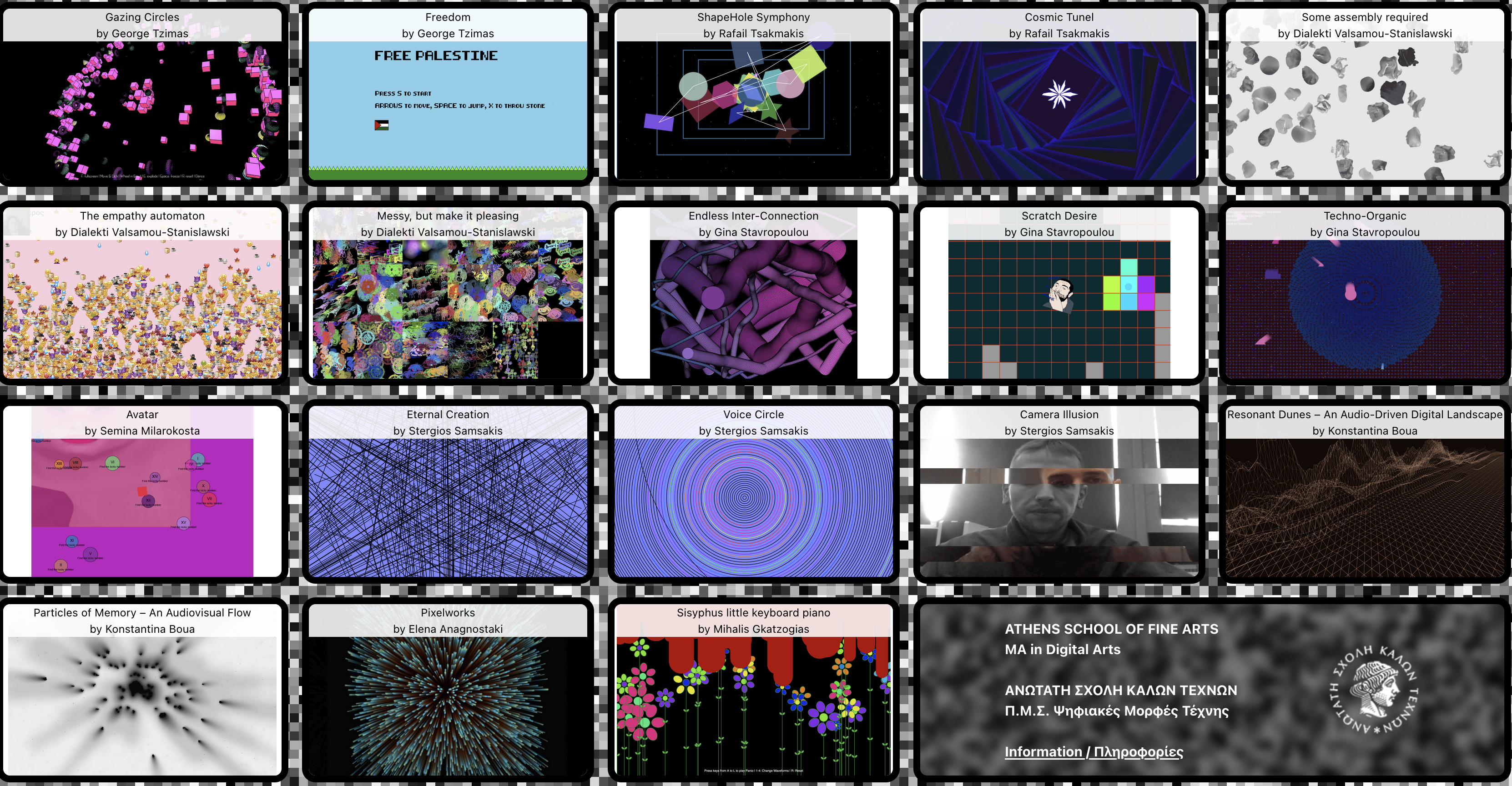

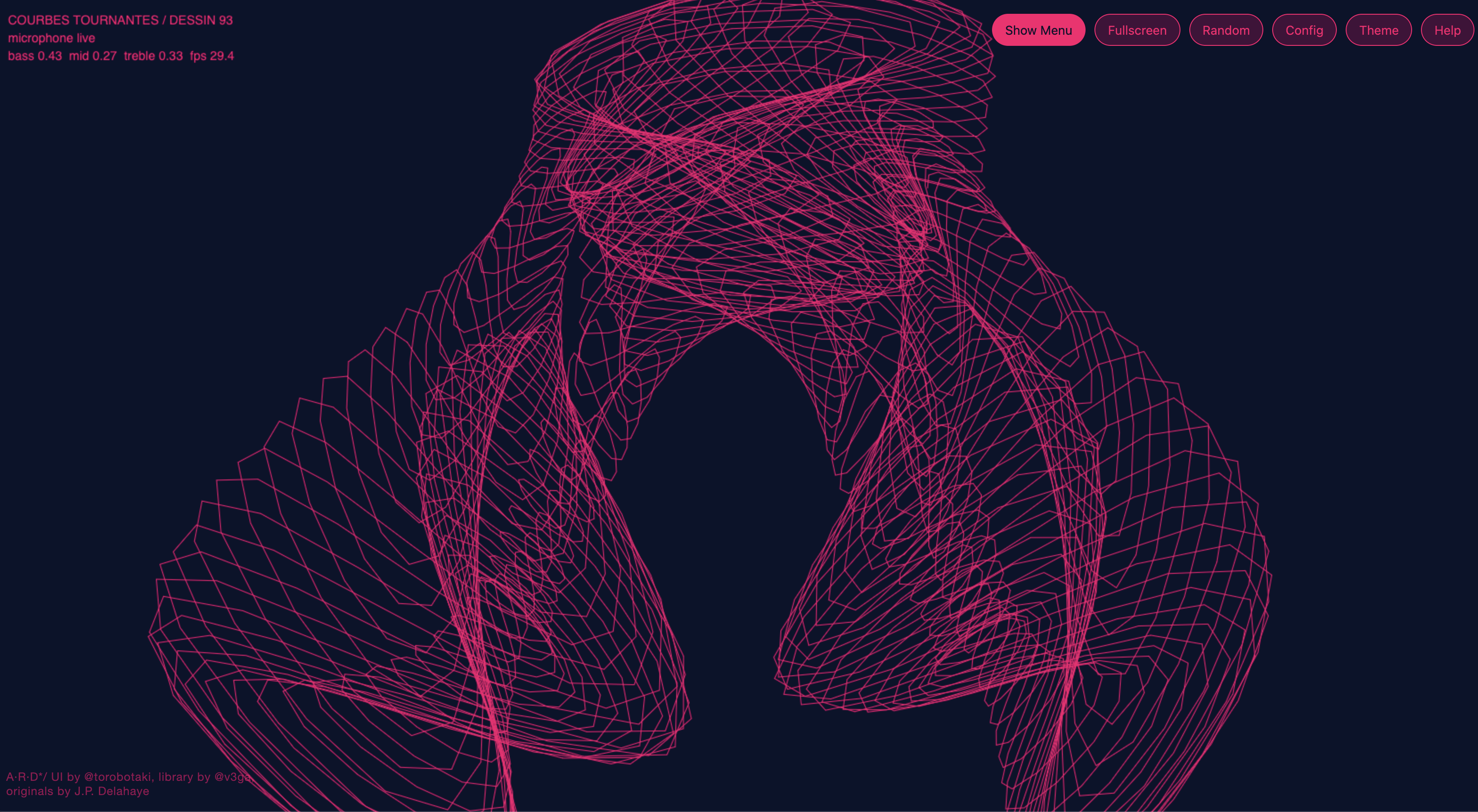

An open-source audio-reactive visualisation tool built from geometric drawings I really like

The context

I like experimenting with visuals, and for a while I had wanted to build a visual tool that is both useful and pleasant to look at.

I kept coming back to the geometric drawing families from Jean-Paul Delahaye’s Dessins géométriques et artistiques, and to @v3ga’s p5.js recoding of them. I like their mathematical elegance, their historical lineage, and the fact that this material already exists in an open-source ecosystem that can be reused and extended.

So I made A·R·D*, short for Audio-Reactive Dessins Géométriques et Artistiques: a browser-based visualisation tool that lets these drawing families react to microphone input, system audio, or any routed sound source the browser can access.

What it is

In plain English, A·R·D* lets you:

- choose one or several drawing families sourced from the original works (1, 2),

- layer them on the same canvas,

- route different audio bands to different visual parameters,

- tune color, speed, opacity, scale and detail live,

- export a composition and bring it back later.

It is less a single sketch and more a small visual tool for exploration and live use.

There is a Random entry point for quick discoveries, but also a full configuration panel when you want to become precise, picky, or obsessive.

What the machine does

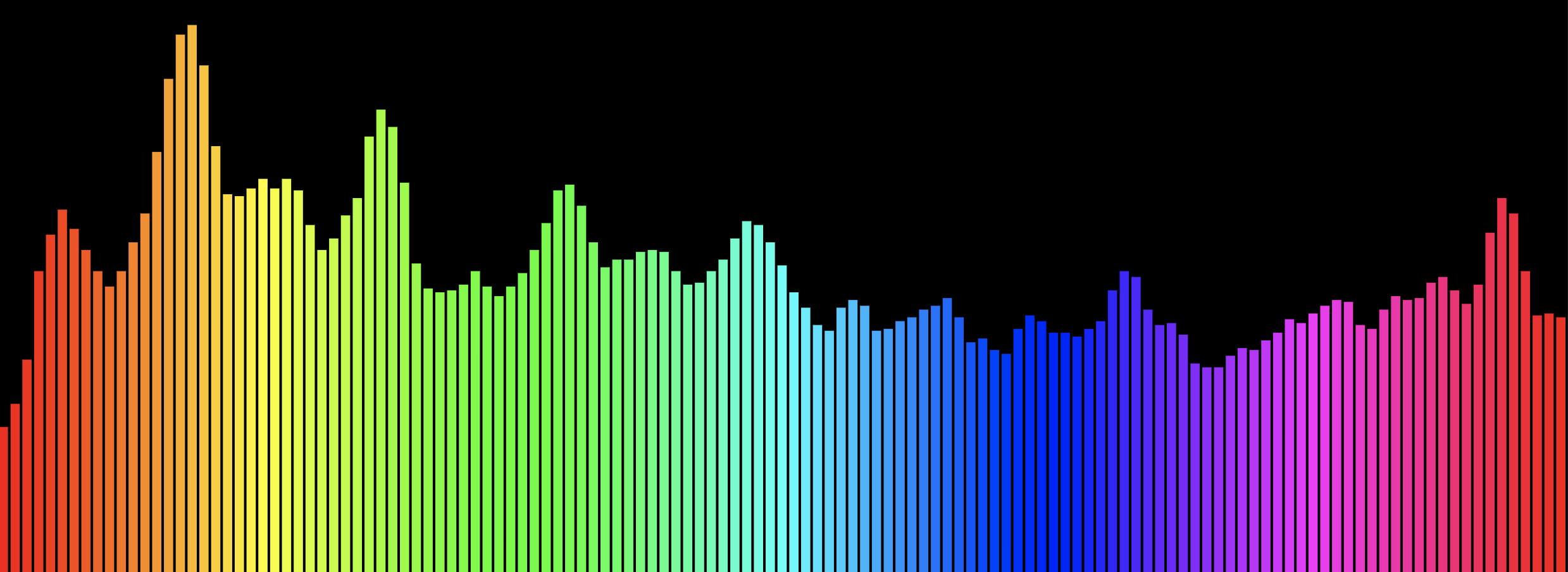

1. It listens

The browser captures incoming sound and splits it into rough bands: bass, mid, treble, plus an overall level. These are then smoothed so the image does not jitter too much from one frame to the next.
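To make the listening step concrete, here is a minimal sketch of band splitting followed by smoothing. It is an illustration, not A·R·D*'s actual code: the function names, the 250 Hz and 4000 Hz cutoffs, and the smoothing factor are all assumptions. The input is assumed to be an array of byte magnitudes (0 to 255) covering 0 Hz up to half the sample rate, which is the shape the Web Audio `AnalyserNode.getByteFrequencyData` call fills in.

```typescript
// Hypothetical names and values throughout; the real project may differ.
type Bands = { bass: number; mid: number; treble: number; level: number };

// Average a slice of byte magnitudes (0..255) and normalise it to 0..1.
function average(bins: Uint8Array, start: number, end: number): number {
  const hi = Math.min(end, bins.length);
  if (hi <= start) return 0;
  let sum = 0;
  for (let i = start; i < hi; i++) sum += bins[i];
  return sum / (hi - start) / 255;
}

// Split a spectrum into rough bands. The cutoffs are assumed defaults.
function splitBands(
  bins: Uint8Array,
  sampleRate: number,
  bassMaxHz = 250,
  midMaxHz = 4000
): Bands {
  // The bins span 0 Hz to sampleRate / 2 in equal steps.
  const hzPerBin = sampleRate / 2 / bins.length;
  const bassEnd = Math.max(1, Math.round(bassMaxHz / hzPerBin));
  const midEnd = Math.max(bassEnd + 1, Math.round(midMaxHz / hzPerBin));
  return {
    bass: average(bins, 0, bassEnd),
    mid: average(bins, bassEnd, midEnd),
    treble: average(bins, midEnd, bins.length),
    level: average(bins, 0, bins.length),
  };
}

// Exponential smoothing: move a fraction `alpha` of the way from the
// previous value to the new one. Lower alpha means less jitter, more lag.
function smoothBands(prev: Bands, next: Bands, alpha = 0.2): Bands {
  const mix = (a: number, b: number) => a + alpha * (b - a);
  return {
    bass: mix(prev.bass, next.bass),
    mid: mix(prev.mid, next.mid),
    treble: mix(prev.treble, next.treble),
    level: mix(prev.level, next.level),
  };
}
```

In a render loop, one would call `splitBands` on each frame's spectrum and feed the result through `smoothBands` together with the previous frame's smoothed values.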

2. It distributes attention

Each drawing can react to one global source, or use a more detailed routing setup where size, speed, detail and color each listen to different parts of the sound.

This is one of the things I like most in the project: it is not just “sound makes image bigger.” Different parts of the image can respond to different parts of the sound, and in Advanced mode everything is configurable.
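The routing idea can be sketched as a small lookup table from visual parameter to audio source. Everything here is an assumption made for illustration (the type names, the `amount` field, and the multiplicative formula); the actual tool may combine sound and parameters differently.

```typescript
// Hypothetical routing model; not taken from the A·R·D* source.
type Source = "bass" | "mid" | "treble" | "level";
type Param = "size" | "speed" | "detail" | "color";
type BandLevels = Record<Source, number>; // each value in 0..1
type Route = { source: Source; amount: number };
type Routing = Record<Param, Route>;

// Simple mode: every parameter listens to the same source.
function globalRouting(source: Source, amount = 1): Routing {
  return {
    size: { source, amount },
    speed: { source, amount },
    detail: { source, amount },
    color: { source, amount },
  };
}

// Scale each base value by its routed band: base * (1 + amount * band).
// An amount of 0 leaves the parameter untouched by sound.
function applyRouting(
  base: Record<Param, number>,
  routing: Routing,
  bands: BandLevels
): Record<Param, number> {
  const drive = (p: Param) =>
    base[p] * (1 + routing[p].amount * bands[routing[p].source]);
  return {
    size: drive("size"),
    speed: drive("speed"),
    detail: drive("detail"),
    color: drive("color"),
  };
}

// Detailed mode: each parameter listens to a different part of the sound.
const detailed: Routing = {
  size: { source: "bass", amount: 1 },
  speed: { source: "mid", amount: 0.5 },
  detail: { source: "treble", amount: 2 },
  color: { source: "level", amount: 0 },
};
```

With this shape, switching between the simple and detailed modes only means swapping the routing table; the drawing code keeps reading the same four parameters.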

3. It reinterprets old geometries as live behavior

The original drawing families were not designed as audio-reactive visuals. So part of the work was not only connecting sound to parameters, but also adjusting families and presets so they behave well in real time.

Some work immediately. Others need more care.

4. It remembers

Compositions can be exported and imported as full scene configurations, including drawing choices, parameter values and theme information. This turns an improvisation into something you can revisit instead of losing it forever.
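One plausible way to do this kind of export and import is a JSON round trip with a light validation step on the way back in. The sketch below assumes a scene shape (`version`, `theme`, `layers`) and field names that are purely illustrative; the real file format of A·R·D* is not described here and may look quite different.

```typescript
// Hypothetical scene shape, for illustration only.
interface LayerConfig {
  family: string;                 // which drawing family this layer uses
  params: Record<string, number>; // parameter values for that layer
}

interface Scene {
  version: number;
  theme: string;
  layers: LayerConfig[];
}

// Serialise a scene to readable JSON text.
function exportScene(scene: Scene): string {
  return JSON.stringify(scene, null, 2);
}

// Parse JSON text back into a scene, rejecting obviously malformed input.
function importScene(json: string): Scene {
  const data: unknown = JSON.parse(json);
  if (typeof data !== "object" || data === null) {
    throw new Error("Scene must be a JSON object");
  }
  const { version, theme, layers } = data as Partial<Scene>;
  if (typeof version !== "number" || typeof theme !== "string" || !Array.isArray(layers)) {
    throw new Error("Scene is missing version, theme or layers");
  }
  for (const layer of layers) {
    if (
      typeof layer !== "object" || layer === null ||
      typeof layer.family !== "string" ||
      typeof layer.params !== "object" || layer.params === null
    ) {
      throw new Error("Each layer needs a family name and a params object");
    }
  }
  return { version, theme, layers };
}
```

Keeping a `version` field in the exported file is a common precaution: it lets a later release recognise and migrate older compositions instead of failing on them.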

Why I made it

The main reason is straightforward: I wanted an open-source, free, audio-reactive visualisation tool that I could use myself and that other people could also use, modify, or improve.

I also genuinely like these visuals. I like their aesthetic: clean and, well, geometric. I like where they come from, and I like the idea of extending that lineage instead of building something disconnected from it. On a practical level, this also meant I did not have to start from nothing, which might be less “artistic” but is much more efficient.

It also matters to me that the project stays open and reusable. There is a lot of good visual work trapped inside closed tools or one-off pieces. I wanted this to be something people can actually run, study, adapt, and build on.

Try it

If you want to test it:

- live version: https://dvalsamou.me/ard/

- source (to get it and run it locally): https://github.com/torobotaki/ard